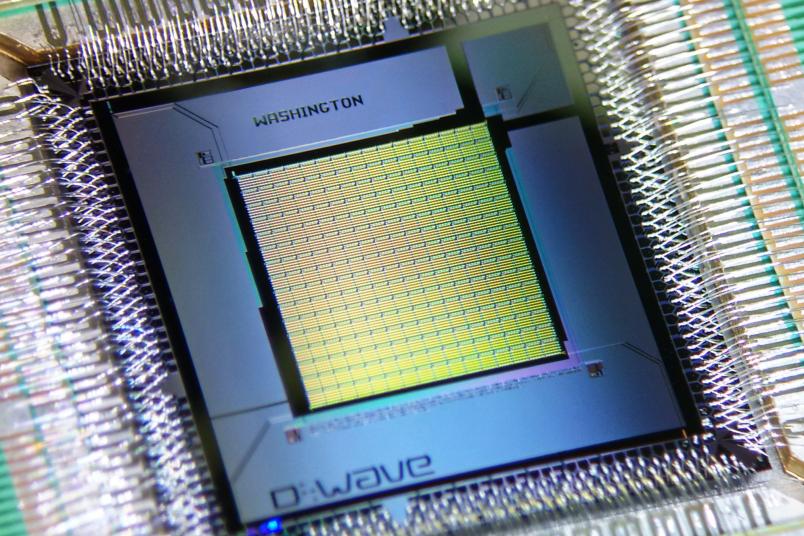

Quantum computers

Future-proof encryption methods

Even though a quantum computer does, as yet, remain pure theory, IT experts are able to determine how easily it could break common encryptions. They deploy a trick familiar used in logics.

While searching for encryption algorithms that not even a powerful quantum computer would be able to break, Prof Dr Alexander May studies so-called hard mathematical problems. Hard mathematical problems are calculations for which no efficient algorithms exist; i.e. it takes a long time for a computer to solve them if confronted with large data volumes. Factorising a large number, for example, poses such a problem.

At first glance, hard mathematical problems are not necessarily connected to encryption algorithms. However, IT researchers use them as aids: they create a link between a certain encoding method and a problem. This is how they can figure out how difficult it would be to break the algorithm.

This is how we can figure out how difficult it would be to actually break a code.

Alexander May

In theoretical computer science, researchers are interested in the number of steps an algorithm has to perform in order to complete a calculation. They usually cannot determine the precise number, but they can assess a ballpark figure of the necessary number of steps – whether it would be 10, 100 or 1,000, for example.

“We now link the number of calculation steps that an algorithm requires for solving a hard problem with the number of calculation steps that would be necessary for breaking a code,” explains Alexander May, Professor of Cryptology and IT Security at Ruhr-Universität Bochum. “This is how we can figure out how difficult it would be to actually break a code.”

To this end, he and his colleagues assume that an algorithm exists which breaks an encoding within the specified period of time T, for example in 280 steps – a bit more than a septillion, a number with 25 digits. For the researchers it is irrelevant how exactly that algorithm would work; they merely assume that it does exist. “The algorithm is a black box,” explains May. “Our work is based on the assumption that it exists and that it will break encryptions within the assumed period of time.” Using logical arguments, he and his colleagues deduce how long it would take to solve a hard problem.

“Under normal circumstances, solving the hard problem would take time c·T,” says May. “Our research aims at reducing the factor c as much as possible, in order to ensure that the statements pertaining to the hard problem and to the breaking of the encryption are not too far apart.”

Reversing the argumentation

Once this step has been accomplished, the researchers will have, for example, created a link like this one: if the encryption can be broken in T = 280 steps, the hard problem can be solved in c·T steps. If c = 210, in 290 steps. It should be noted that this statement is purely hypothetical, but the researchers deploy a common trick used in logics: they reverse the argumentation. To this end, they have to negate both statements.

For example, the valid argument “If a dog is healthy, it has four legs” can be reversed; in order to remain valid, the statements have to be negated: “If a dog doesn’t have four legs, it is not healthy.” In the same way, Alexander May reverses the link identified between breaking a code and a hard problem: if the hard problem cannot be solved in 290 steps, the encryption cannot be broken in 280 steps.

The reversed statement applies to all conceivable algorithms.

Alexander May

As May didn’t make use of any properties of the hypothetical code breaking algorithm – apart from its mere existence –, he is certain: “The reversed statement applies to all conceivable algorithms, including those that are yet to be invented.” This approach of shifting a question to another one is called reduction. Alexander May and his colleagues have reduced their question, namely if a code can be easily broken, to a different question, namely how quickly a hard problem can be solved.

IT researchers refer to the subset sum problem as a hard problem: assuming one is examining a list of numbers, is it possible to find a selection of numbers in that list that would add up to precisely zero? If the list is, for example, −9, -3, 1, 4, 5, the answer is yes, because −9, 4 and 5 add up to zero. This example may be simple. But the time necessary to solve it depends on the length of the list. Verifying if a selection of numbers exists in a list that add up to zero thus constitutes a hard problem.

The time required for breaking a code

Alexander May and his colleagues are interested in such problems, because they use them as aids in order to make statements about the security of cryptographic algorithms. Based on the time required for solving a hard problem and using solely logical argumentation, they extrapolate the period of time required for breaking a cryptographic algorithm. It is rarely possible to stipulate the time precisely. However, they are able to identify its extent. “We know how much time an algorithm would need at most,” elaborates Alexander May. “But we don’t know if the process could be performed faster.”

The challenge faced by the researchers is narrowing down the scope of the actual running time. A standard programme requires n·2n calculation steps for a subset sum problem. May has established that it could be performed much more quickly, namely in approx. 20,3·n calculation steps – an enormous reduction.

These novel encryption methods will be secure even once the quantum computer exists.

Alexander May

The subset sum problem is of great interest to him, because it would probably also constitute a tough nut for a quantum computer. “Factorisation, on which almost the entire common encryption is based, can be performed by a quantum computer within a short period of time. We know this, even though such a computer does not yet exist,” says May.

However, even for a quantum computer, the subset sum problem would mean time-consuming calculations. This is why Alexander May designs encryption algorithms that he links with the subset sum problem using logical argumentation. “These novel encryption methods will be secure even once the quantum computer exists,” he says.